Your AI Metrics Are Lying to You: Three Shifts Leaders Must Make Now

Most organizations believe they are making progress on AI.

The dashboards look healthy. Usage is up. Licenses are deployed. And by conventional measures, adoption is succeeding.

But here is the problem: usage does not equate to understanding. And understanding is what actually predicts value.

Think about a gym membership. Daily check-ins are a metric. Whether the member is getting stronger is a different question entirely. You can show up, go through the motions, and leave with nothing to show for it. The same is true for AI. Interaction is not transformation.

That gap between adoption and actual value creation is where most organizations are losing ground right now. And closing it requires three deliberate leadership shifts that most companies have not yet made.

1. Stop Measuring Means. Start Measuring Outcomes.

The easiest trap in AI strategy is optimizing for the wrong endpoint.

Productivity percentages, time saved per task, usage rates. These are means metrics. They tell you something is happening. They do not tell you whether it matters. And the data backs this up: according to Deloitte's State of AI in the Enterprise 2026, only one third of organizations use AI to deeply transform their business by creating new products or reinventing core processes, while 37% use it at a surface level with little change to how work actually gets done.

I saw this pattern before in cloud technology, when I built the Cost Optimization Practice at Google. Cost optimization became the North Star for many organizations, and the fastest way to reduce cloud spend was to turn infrastructure off. Instant savings. Wrong outcome. The metric became the mission, and the actual mission got lost.

AI adoption is heading in the same direction.

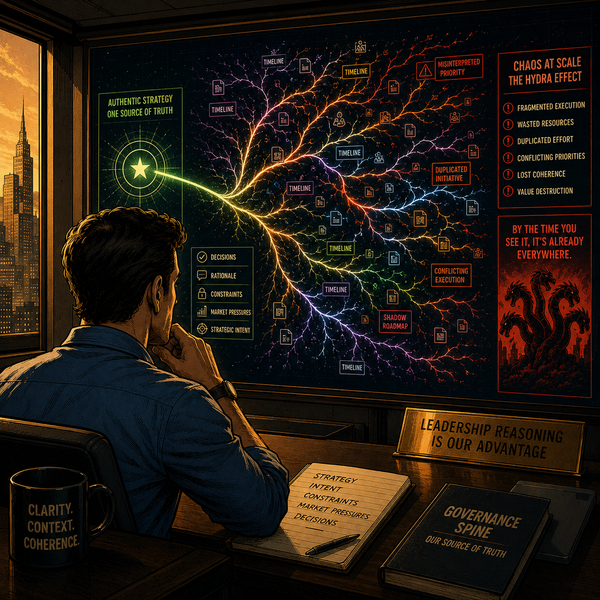

The metric that actually predicts transformation is subtraction. What have you stopped doing? What process no longer exists because you built something better? What decision no longer requires three approval layers because an agent handles the routing?

I wrote about this in The Executive Playbook for AI Structural Change: every quarter, your leadership team should be able to answer the question "What did we eliminate?" If nothing has been retired, nothing has been transformed. You are digitizing existing complexity, not removing it.

Build your success metrics around what you have made obsolete. That is the signal that AI is actually working.

2. Leadership Is Not a Sponsor. It Is a Standard.

Here is the uncomfortable truth: if your executives are not exemplary users of AI, your organization will not be either.

Not casual users. Not curious observers. Exemplary. Fluent. Operating at speed.

Leadership sets the behavioral standard whether they intend to or not. If the people at the top are still running their workflows the same way they did three years ago, every layer beneath them will find a way to rationalize doing the same. DHR Global's 2026 Workforce Trends Report found a growing disconnect between how quickly AI adoption is advancing and how clearly leaders communicate what that progress means for people's roles and careers. When communication lags behind adoption, uncertainty fills the gap.

This is not about seniority. It is about capability. The knowledge and experience a senior leader carries is irreplaceable, but only if they can translate it into executable insight at the velocity modern AI demands. Wisdom without fluency is a bottleneck.

The prescription is direct: make space in your executives' workdays for genuine AI education. Not a half-day workshop. Not a quarterly briefing. Structured, recurring, hands-on practice that builds real fluency over time. If the calendar will not allow it, the calendar needs to change.

The organizations that win the next five years will be those where the people setting strategy are also the most capable operators in the room.

3. Your Individual Contributors Need to Think Like Executives.

This is the shift most organizations are least prepared for, and it is the most important one.

In the industrial model, efficiency came from segmentation. Narrow roles. Defined swim lanes. People optimized for a specific slice of the workflow. That design made sense for a certain era. It does not map to an agentic future.

As agents take over coordination, routing, reporting, and pattern recognition, the humans left in the loop are not there to execute tasks. They are there to judge outcomes. To catch what the agent missed. To make the call when confidence thresholds are not met. To connect decisions across domains that a model can process but cannot truly understand.

According to MIT Sloan Management Review and Boston Consulting Group's joint research on the emerging agentic enterprise, strategic oversight, ethical governance, and the ability to orchestrate human and AI teams are becoming the most critical human skills as AI agents handle tasks previously performed by human workers.

That requires a fundamentally different approach to developing your people.

Your individual contributors need broad access to data, not just the slice relevant to their role. They need contextual understanding of how decisions in one function ripple into another. They need practice making judgment calls, not just following playbooks. And critically, they need to be trusted with that authority now, before the agentic infrastructure is fully in place, so they are ready when it is.

I explored the shape of this role in The Operator Model for an Agentic Future and the cross-functional mindset it demands in The New Pirate Age. The point worth reinforcing here is that developing these people is not a training initiative. It is an infrastructure investment. If your individual contributors are still operating in narrow, task-segmented roles today, they will not be equipped for the cognitive demands coming in the next 18 to 36 months.

The Window Is Narrowing

Microsoft's Work Trend Index found that 82% of leaders say 2025 was a pivotal year to rethink strategy and operations, and 81% expect agents to be moderately or extensively integrated into their AI strategy within 12 to 18 months. That window is not a forecast anymore. It is a countdown.

Organizations that redefine their success metrics now will have the feedback loops to actually improve. Organizations where leadership models genuine fluency will see it cascade. Organizations that develop their individual contributors for systems-level thinking will have the human infrastructure to deploy AI at scale without losing accountability.

The ones that do not will find themselves with impressive usage dashboards and very little to show for them.

The future does not reward adoption.

It rewards readiness.

Start there.