The New Playbook: The Operator Model for an Agentic Future

The way we design teams today assumes a set of constraints that no longer exist.

We still optimize for coordination, alignment, and information flow as if humans were the only systems capable of synthesis. Meetings are the mechanism for alignment. Headcount compensates for uncertainty. Collaboration compensates for missing information.

Agentic systems change all of that.

When data is mapped, workflows are observable, and AI can retrieve, reason, simulate, and act, the limiting factor is no longer coordination.

It’s judgment.

The Operator

At the center of the future team is the Operator — the human with deep domain expertise and earned wisdom. Not just someone who knows what to do, but someone who understands why a decision was made, where the real tradeoffs live, and how failure modes manifest.

This role is not purely technical or managerial. It is a hybrid. The Operator:

- Executes within deep domain contexts

- Uses AI as a reasoning and leverage layer

- Understands structured data, agentic workflows, and automation

- Translates intuition into scalable execution

They are directly accountable for outcomes and for shaping how AI is applied in service of those outcomes. Technical partners extend the Operator’s scope; they do not replace the Operator’s judgment.

Fewer Humans. Higher Leverage.

The Operator does not need a large team.

The old model required many people to:

- Move updates

- Align perspectives

- Track metrics

- Coordinate across domains

Agentic systems now absorb much of this continuous coordination.

Teams shrink. The humans who remain exist not to coordinate but to learn and to judge.

This is by design, not by accident.

Mentees, Not Contributors

Humans who work alongside Operators function like doctoral candidates in residency: they assist where human judgment still matters, but their primary role is learning how to think with context.

This is structured exposure, not shadowing.

Over time these mentees become Operators themselves — capable of anchoring new domains, subfields, and areas of capability as organizations expand.

Expertise is fragmenting. Deep mastery is found in narrower slices of complexity. Organizations will need more Operators across more specialties, not broader teams of generalists.

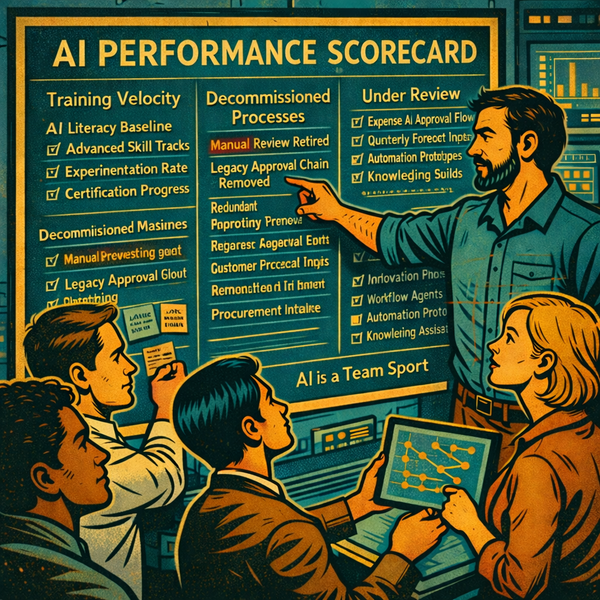

Teaching Judgment Explicitly

In the Operator model, mentorship is a first-class responsibility.

Operators are accountable for:

- Outcome delivery

- Quality of decisions

- Development of future Operators

That means teaching why decisions were made — explaining prioritization, interpretation, tradeoffs, and how to think through ambiguity.

Metrics must align with both project success and human development. Knowledge transfer isn’t culture; it’s infrastructure.

Why Traditional Team Models Break

Most organizations still default to the same sequence:

- Generate an idea

- Assemble a large team

- Schedule meetings to align

- Use humans to coordinate and report

This is expensive, slow, and redundant.

AI now acts as a collaboration partner. It can surface risks, simulate outcomes, maintain state, and connect information over time without meetings. If data structures are intentionally designed, humans no longer need to be in the loop just to keep work synchronized.

The future model assumes:

- Fewer humans in the loop

- Higher-caliber humans in critical roles

- Automated coordination and reporting

- Humans focused on judgment, not logistics

The result: less overhead, fewer meetings, faster decisions.

Where This Model Applies

This isn’t a beginner construct.

The Operator model applies to organizations in advanced or scaling stages of AI adoption, where:

- Data is indexed and accessible

- AI is embedded into workflows

- The goal is leverage, not experimentation

Most organizations have not fully embraced this yet. But this is the endpoint they are moving toward — whether they have named it or not.

Teams of the future will not be larger.

They will be sharper.

And the defining question will no longer be “How many people do we need?”

It will be:

Who holds the judgment? And who are we training next?